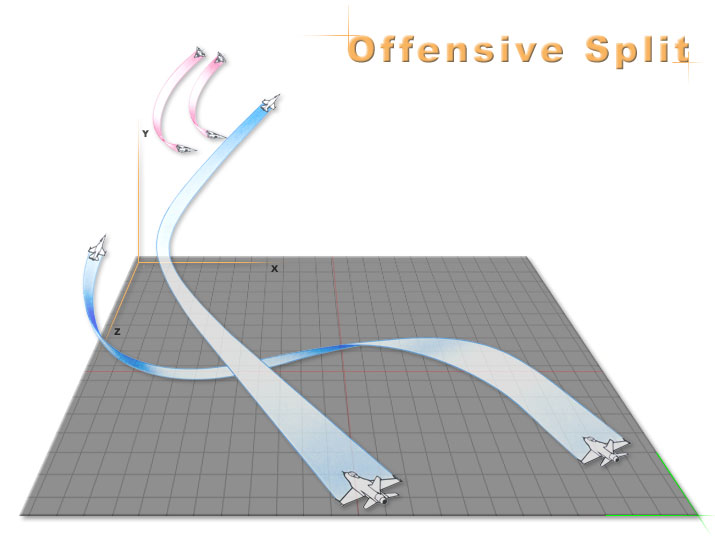

With the rapid development of unmanned combat aerial vehicle (UCAV) technologies, UCAVs are playing an increasingly important role in military operations. It has become an inevitable trend in future air combat that UCAVs complete air combat tasks independently to gain air superiority. In this paper, the UCAV maneuver decision problem in continuous action space is studied based on the deep reinforcement learning strategy optimization method. A UCAV platform model with a continuous action space is established. To address the insufficient exploration ability of the Ornstein–Uhlenbeck (OU) exploration strategy in the deep deterministic policy gradient (DDPG) algorithm, a heuristic DDPG algorithm is proposed by introducing a heuristic exploration strategy, and a UCAV air combat maneuver decision method based on the heuristic DDPG algorithm is then proposed. The superior performance of the algorithm is verified by comparison with other algorithms in the test environment, and the effectiveness of the decision method is verified by simulating air combat tasks of different difficulty and with different attack modes.

Unmanned aerial vehicles (UAVs) have become significantly important in air combat, where intelligent UAV swarms will be able to tackle tasks of high complexity and dynamics. The key to giving UAVs this capability is autonomous maneuver decision making. In this paper, an autonomous maneuver strategy for UAV swarms in beyond-visual-range air combat based on reinforcement learning is proposed. First, based on the process of air combat and the constraints of the swarm, the motion model of the UAV and the multi-to-one air combat model are established. Second, a two-stage maneuver strategy based on air combat principles is designed, which includes inter-vehicle collaboration and target-vehicle confrontation. Then, a swarm air combat algorithm based on the deep deterministic policy gradient (DDPG) strategy is proposed for online strategy training. Finally, the effectiveness of the proposed algorithm is validated by multi-scene simulations. The results show that the algorithm is suitable for UAV swarms of different scales.

# Air combat maneuvers series

In this paper, a hybrid deep learning network-based model is proposed and implemented for maneuver decision-making in an air combat environment. The model consists of a stacked sparse auto-encoder network for dimensionality reduction of high-dimensional, dynamic time series combat-related data, and a long short-term memory network for capturing the quantitative relationship between maneuver control variables and the time series combat-related data after dimensionality reduction. The model has three features: 1) time series data is used as the basis of decision-making, which is more in line with the actual decision-making process; 2) a stacked sparse auto-encoder network is used to reduce the dimension of the time series data so that the result is predicted more accurately; 3) the model takes the maneuver control variables as its output to control the maneuver, making the maneuver process more flexible. The relevant experiments demonstrate that the proposed model can effectively improve the prediction accuracy and convergence rate in the prediction of maneuver control variables.
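To make the "sparse" part of the stacked sparse auto-encoder concrete, the sketch below computes a sparsity penalty on a batch of hidden activations. It assumes the common KL-divergence formulation with a target activation `rho`; the abstract does not say which penalty the authors use, and the function names and default values here are hypothetical.

```python
import math


def mean_activations(hidden_batch):
    """Average activation of each hidden unit over a batch of code vectors."""
    n = len(hidden_batch)
    width = len(hidden_batch[0])
    return [sum(row[j] for row in hidden_batch) / n for j in range(width)]


def kl_sparsity_penalty(hidden_batch, rho=0.05, eps=1e-8):
    """Sum over hidden units of KL(rho || rho_hat_j).

    Assumption: activations lie in (0, 1), e.g. sigmoid outputs, and the
    target sparsity rho is a hypothetical default, not a value from the paper.
    """
    penalty = 0.0
    for rho_hat in mean_activations(hidden_batch):
        # Clamp to avoid log(0) when a unit is always off or always on.
        rho_hat = min(max(rho_hat, eps), 1.0 - eps)
        penalty += rho * math.log(rho / rho_hat)
        penalty += (1.0 - rho) * math.log((1.0 - rho) / (1.0 - rho_hat))
    return penalty
```

In a training loop this term would typically be added, with a weight, to the reconstruction loss of each auto-encoder layer, so that only a few hidden units respond strongly to any given input window.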

With the development of information technology, the degree of intelligence in air combat is increasing, and the demand for automated intelligent decision-making systems is growing. Based on the characteristics of over-the-horizon air combat, this paper constructs an over-the-horizon air combat training environment, which includes aircraft modeling, air combat scene design, enemy aircraft strategy design, and reward and punishment signal design. To improve the efficiency with which the reinforcement learning algorithm explores the strategy space, this paper proposes a heuristic Q-Network method that integrates expert experience and uses it as a heuristic signal to guide the search process. At the same time, heuristic exploration and random exploration are combined. For the over-the-horizon air combat maneuver decision problem, the heuristic Q-Network method is adopted to train the neural network model in the over-the-horizon air combat training environment. Through continuous interaction with the environment, self-learning of the air combat maneuver strategy is realized. The efficiency of the heuristic Q-Network method and the effectiveness of the air combat maneuver strategy are verified by simulation experiments.
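One way to picture the combination of heuristic and random exploration is an action-selection rule that sometimes follows an expert suggestion, sometimes acts at random, and otherwise acts greedily on the Q-values. The sketch below is an illustration under that assumption only; the abstract does not specify the mixing rule, and `select_action`, `p_heuristic`, and `p_random` are hypothetical names and parameters.

```python
import random


def select_action(q_values, expert_action, p_heuristic=0.3, p_random=0.1, rng=random):
    """Pick an action index given Q-values and an expert-suggested action.

    Assumed scheme (not confirmed by the source):
      - with probability p_heuristic, follow the expert suggestion;
      - with probability p_random, pick a uniformly random action;
      - otherwise, pick the action with the highest Q-value.
    """
    u = rng.random()  # uniform in [0.0, 1.0)
    if u < p_heuristic:
        return expert_action
    if u < p_heuristic + p_random:
        return rng.randrange(len(q_values))
    # Greedy choice: index of the largest Q-value.
    return max(range(len(q_values)), key=q_values.__getitem__)
```

In practice both probabilities would usually be decayed over training, so that the agent leans on expert experience early and on its learned Q-values later.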